ChatterUI App

Run LLMs on-device or connect to various commercial and open-source APIs. ChatterUI aims to provide a mobile-friendly interface with fine-grained control over chat structuring.

Features:

Run LLMs on-device in Local Mode

Connect to various APIs in Remote Mode

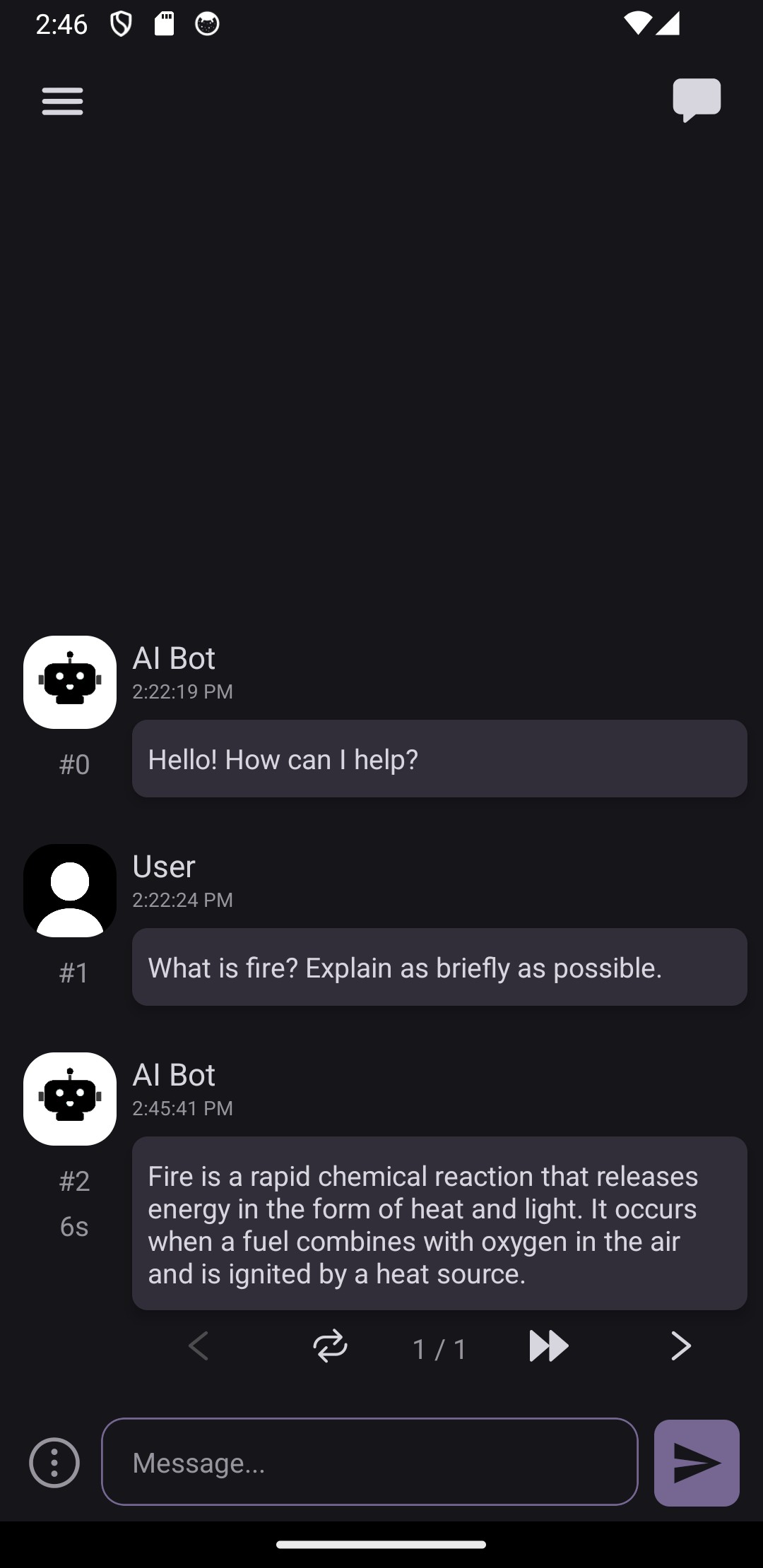

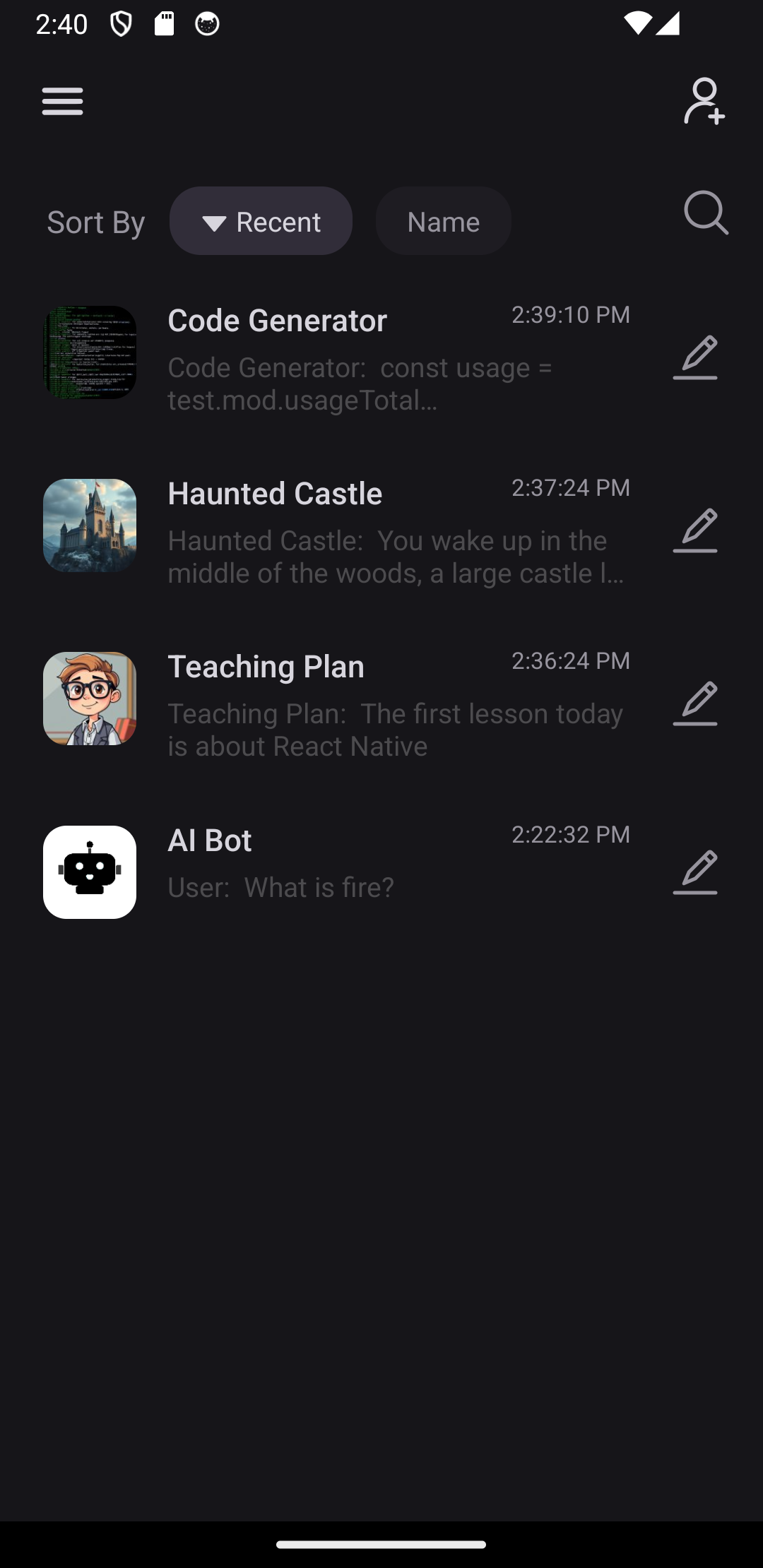

Chat with characters (supports the Character Card v2 specification)

Create and manage multiple chats per character.

Customize Sampler fields and Instruct formatting

Integrates with your device’s text-to-speech (TTS) engine

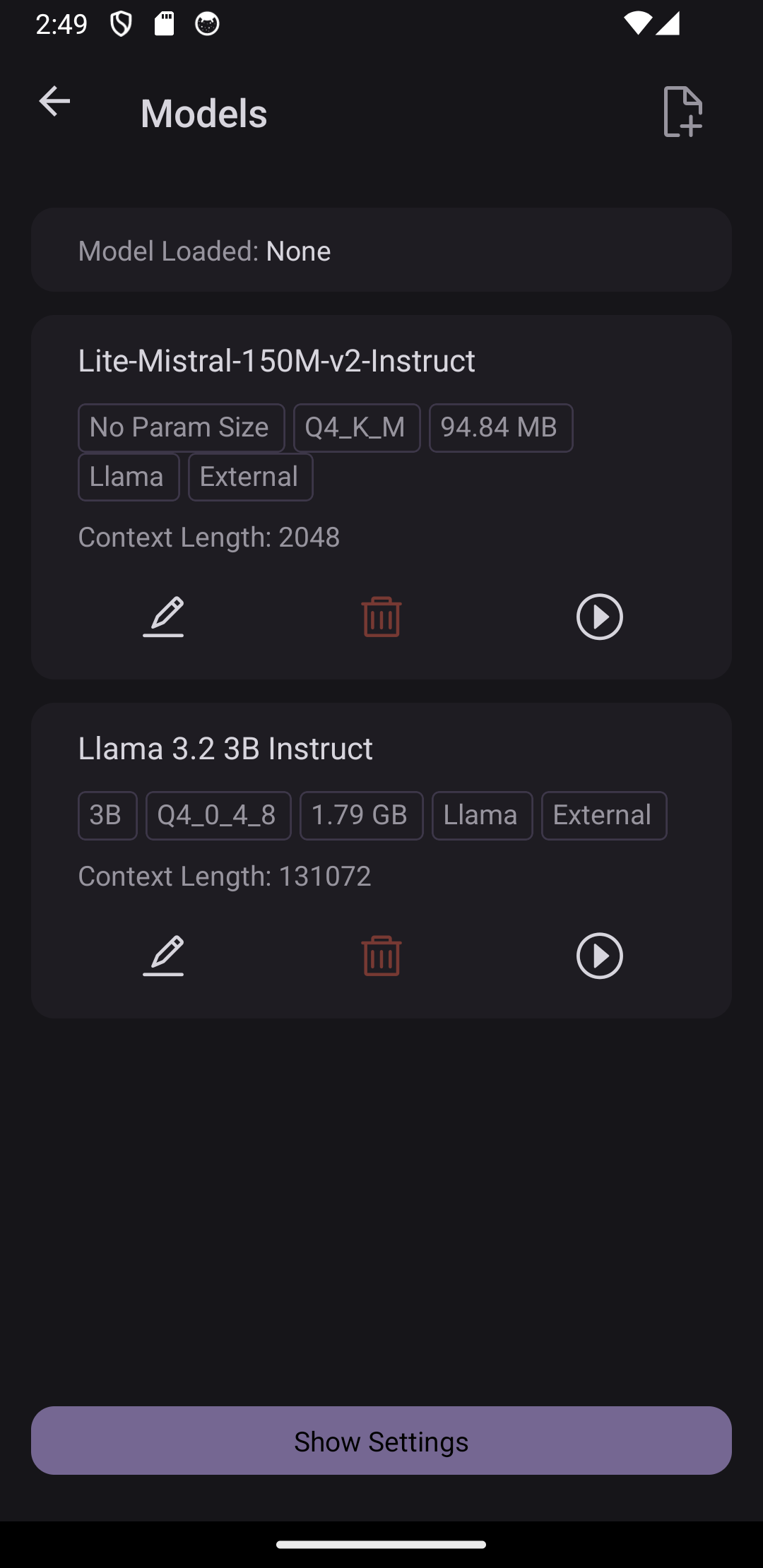

To use on-device inference, first enable Local Mode, then go to Models > Import Model / Use External Model and choose a GGUF model that fits in your device's memory. The two import options work as follows:

Import Model: Copies the model file into ChatterUI, potentially speeding up startup time.

Use External Model: Loads the model directly from your device storage, so large files don't need to be copied into ChatterUI, at the cost of slightly slower load times.
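Both options expect a GGUF file. As an illustrative aside (this is not part of ChatterUI), you can sanity-check a file on a desktop before copying it to your phone by reading its fixed-size header. The sketch below assumes the GGUF v2+ layout from the ggml GGUF specification: the 4-byte magic `GGUF`, a little-endian `uint32` version, then `uint64` tensor and metadata key/value counts. The function names are hypothetical.

```python
import struct

GGUF_MAGIC = b"GGUF"          # first four bytes of every GGUF file
HEADER_SIZE = 4 + 4 + 8 + 8   # magic + uint32 version + two uint64 counts (GGUF v2+)


def parse_gguf_header(data: bytes) -> dict:
    """Parse the fixed GGUF header from raw bytes; raise ValueError if it is not GGUF."""
    if len(data) < HEADER_SIZE or data[:4] != GGUF_MAGIC:
        raise ValueError("data does not start with a GGUF header")
    # "<IQQ": little-endian uint32 version, uint64 tensor count, uint64 metadata KV count
    version, tensor_count, kv_count = struct.unpack("<IQQ", data[4:HEADER_SIZE])
    return {"version": version, "tensor_count": tensor_count, "metadata_kv_count": kv_count}


def read_gguf_header(path: str) -> dict:
    """Read only the first HEADER_SIZE bytes of a file and parse them."""
    with open(path, "rb") as f:
        return parse_gguf_header(f.read(HEADER_SIZE))
```

For example, `read_gguf_header("model.gguf")` returns the version and counts for a valid file and raises `ValueError` for anything else, such as a ZIP archive that was renamed by mistake.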

Once the model is imported or linked, load it and begin chatting!

Note: On devices with a Snapdragon 8 Gen 1 or newer, or an Exynos 2200 or newer, Q4_0 quantization is recommended for the best performance.
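To judge whether a model will fit in memory, a rough estimate of its weight size helps. In ggml's Q4_0 format, weights are stored in blocks of 32, each holding one fp16 scale plus 32 packed 4-bit values, which is 18 bytes per 32 weights (4.5 bits per weight). The sketch below assumes every weight is stored as Q4_0 and applies a hypothetical 25% headroom for the context cache and runtime overhead. Real Q4_0 files may come out somewhat larger because some tensors can be kept at higher precision.

```python
QK4_0 = 32                              # weights per Q4_0 block in ggml
Q4_0_BLOCK_BYTES = 2 + QK4_0 // 2       # fp16 scale (2 bytes) + 32 packed 4-bit values (16 bytes)


def q4_0_weight_bytes(n_params: int) -> int:
    """Approximate bytes needed to store n_params weights entirely as Q4_0."""
    n_blocks = -(-n_params // QK4_0)    # ceiling division: a partial block still costs a full block
    return n_blocks * Q4_0_BLOCK_BYTES


def fits_in_memory(n_params: int, available_bytes: int, headroom: float = 0.25) -> bool:
    """Hypothetical rule of thumb: weights plus a `headroom` fraction extra must fit."""
    needed = q4_0_weight_bytes(n_params) * (1 + headroom)
    return needed <= available_bytes
```

For a 7B-parameter model, `q4_0_weight_bytes(7_000_000_000)` gives 3,937,500,000 bytes (about 3.9 GB) for the weights alone, so `fits_in_memory` returns `True` with 8 GiB available but `False` with 4 GiB.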

Remote Mode allows you to connect to a few common APIs from both commercial and open-source projects.